As explained in chapter 5, the most common type of evaluation process is based on a combination of criteria.

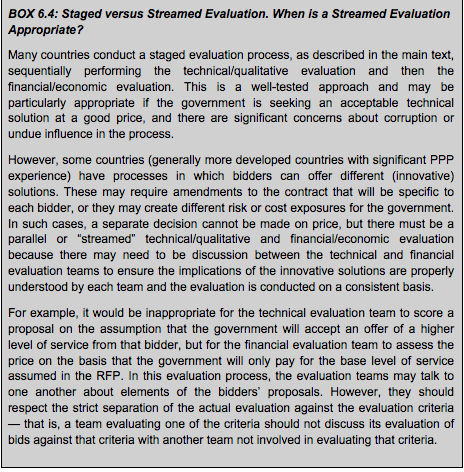

In this context, as introduced in chapter 5, there are two approaches, which may be regarded as good practice: a streamed process and a consecutive or staged approach. Factors relevant to the choice between these approaches are discussed in box 6.4.

When the evaluation process does not allow complete separation of technical/qualitative criteria from financial/numerical criteria, it is paramount for transparency (and considered good practice) to carry out the evaluation in a structured streamed process[9]. Apart from the check of administrative conformity/compliance, the evaluation work should then be divided into the following concurrent streams (in terms of process management):

- Evaluation of the technical offer and other potential valuation drivers subject to qualitative assessment.

- Evaluation of the economic/price offer and potentially other numerical criteria.

In many jurisdictions, rather than streams, these sub-processes of evaluation will be done consecutively in separate stages. In some cases, this is a legal requirement (prescribed by law, for example, in EU legislation) with the authority obliged to seal the completed technical or qualitative evaluation before opening the financial/economic offer envelope. In many countries, this process occurs in a public venue.
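The staged sequence described above can be sketched as a small state machine. This is only an illustrative sketch: the class `StagedEvaluation` and its method names are hypothetical and do not come from any procurement system or legal text. The only rule it encodes is that financial offers cannot be opened until the technical evaluation has been sealed, and that sealed technical scores cannot be changed afterwards.

```python
from dataclasses import dataclass, field


@dataclass
class StagedEvaluation:
    """Toy model of a two-stage evaluation: technical first, then financial."""

    technical_scores: dict = field(default_factory=dict)
    financial_offers: dict = field(default_factory=dict)
    technical_sealed: bool = False

    def record_technical_score(self, bidder: str, score: float) -> None:
        # Technical scores may only be recorded or revised before sealing.
        if self.technical_sealed:
            raise RuntimeError("technical evaluation is sealed")
        self.technical_scores[bidder] = score

    def seal_technical(self) -> None:
        # After this point the technical results are frozen.
        self.technical_sealed = True

    def open_financial_offer(self, bidder: str, price: float) -> None:
        # The financial envelope stays closed until the technical stage is sealed.
        if not self.technical_sealed:
            raise RuntimeError("seal the technical evaluation first")
        if bidder not in self.technical_scores:
            raise KeyError(f"no technical score recorded for {bidder}")
        self.financial_offers[bidder] = price


evaluation = StagedEvaluation()
evaluation.record_technical_score("Bidder A", 82.0)
evaluation.seal_technical()
evaluation.open_financial_offer("Bidder A", 120.0)
```

In a streamed process the same separation would be enforced by keeping the two teams and their information apart rather than by sequencing.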

There are different techniques to organize and perform the qualitative evaluation work and to ensure that processes and criteria are applied consistently across bids. Examples include having each evaluator assess the same sub-criteria across all bids; having each evaluator assess one bid under all sub-criteria and then discuss the results with other specialists to ensure consistency; or having multiple evaluators jointly assess bids against sub-criteria through a consensus process [10].
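The technical floor mentioned in note [10] can be illustrated with a short check. This is a minimal sketch under stated assumptions: the function name `passes_technical_floor`, the sub-criteria names, and the threshold values (an overall floor of 60 points and a minimum of 20 points for "design") are hypothetical stand-ins for the x and y left open in the note, and the total technical score is assumed to be the simple sum of the sub-criteria scores.

```python
def passes_technical_floor(sub_scores, overall_floor, sub_floors):
    """Return True if a bid meets the overall floor and every sub-criterion floor.

    sub_scores:    mapping of sub-criterion name -> points awarded
    overall_floor: minimum total technical points (the "x" in the note)
    sub_floors:    mapping of sub-criterion name -> minimum points (the "y")
    """
    total = sum(sub_scores.values())
    if total < overall_floor:
        return False
    # A missing sub-criterion is treated as zero points (an assumption).
    return all(
        sub_scores.get(name, 0.0) >= minimum
        for name, minimum in sub_floors.items()
    )


# Hypothetical technical scores for three bids.
bids = {
    "Bidder A": {"design": 30, "operations": 25, "risk": 15},  # total 70
    "Bidder B": {"design": 15, "operations": 30, "risk": 20},  # total 65
    "Bidder C": {"design": 25, "operations": 20, "risk": 10},  # total 55
}

qualified = [
    name
    for name, scores in bids.items()
    if passes_technical_floor(scores, overall_floor=60, sub_floors={"design": 20})
]
print(qualified)  # ['Bidder A']
```

Here Bidder B exceeds the overall floor but fails the sub-criterion floor, and Bidder C fails the overall floor, so under the rule in note [10] neither price offer would be considered.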

If the rules of the tender process allow bidders to submit alternative offers along with their primary bid, the evaluation process must identify how each bid (the base bid and the alternative bid) will be treated. For example, each may be evaluated as a separate bid, provided that the base bid has met all of the administrative and compliance requirements and the alternative bid has met any requirements set out in the RFP for such bids.

In some projects, the evaluation criteria are such that the evaluation can be enhanced by developing a performance model to aggregate and systematically and objectively assess input data from bidders. However, this requires an up-front investment in development of the performance model, validation that the model correctly links the inputs to the evaluation criteria, and transparency in the process. In some (but not all) cases, the evaluation criteria will be such that the performance model can be developed from the financial model for the project.

Most of the potential approaches to evaluation are valid as long as they respect transparency and fairness and, to that end, ensure that the evaluation criteria and sub-criteria are interpreted consistently. For this reason, as noted, it is good practice to develop a manual for evaluation.

It is important to keep the different elements of the evaluation separated by physical and informational barriers, that is, those involved in the technical evaluation should not have access to details of the financial evaluation and vice versa. This ensures that evaluators’ perceptions are not influenced by aspects of the bid that are not relevant to the specific criteria they are evaluating.

Regarding the financial offer, the evaluation panel will have to consider the consistency and responsiveness of each of the offers (some processes require certain documents to be in the financial envelope rather than in the technical envelope[11]). Analysis of the financial offers can be complex, and it is good practice for the evaluation panel to obtain detailed independent analysis of the financial offers by finance specialists. Time should be allowed for this. The evaluation panel (or the awarding authority) may even reject some offers because they are considered to be potentially in “temerity”[12], that is, underbidding too aggressively, or for other reasons described in chapter 5. In some processes, the financial offer will be subject not only to quantitative/numerical evaluation but also to some qualitative assessment.
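The relative temerity threshold given as an example in note [12] (an offer more than 15 percent below the average bid) can be sketched as follows. The function name `flag_temerity` and the prices are hypothetical, and the sketch assumes the average is taken over all responsive offers, including the low one; actual rules on how the average is computed vary and are not specified here.

```python
from statistics import mean


def flag_temerity(prices, threshold=0.15):
    """Return bidders whose price is more than `threshold` below the average.

    prices:    mapping of bidder name -> offered price
    threshold: relative margin below the average bid (0.15 = 15 percent)
    """
    average = mean(prices.values())
    cutoff = average * (1 - threshold)
    return [bidder for bidder, price in prices.items() if price < cutoff]


# Hypothetical financial offers (same currency units).
offers = {"Bidder A": 100.0, "Bidder B": 95.0, "Bidder C": 105.0, "Bidder D": 70.0}

# Average is 92.5, so the cutoff is 92.5 * 0.85 = 78.625.
print(flag_temerity(offers))  # ['Bidder D']
```

A flag of this kind marks an offer for scrutiny rather than automatic rejection: as note [12] explains, the bidder may be given the opportunity to justify the offer.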

Only after this check, and once the responsive offers have been identified, will it be possible to announce the awardee on the basis of the final scoring calculation.

[9] Further reading on evaluation matters may be found in Infrastructure Australia (2011) National Public Private Partnership Guidelines. A discussion of the bid evaluation process can be found in section 12 of Volume 2 (Practitioners’ Guide) of these guidelines.

[10] As introduced in chapter 4, it is not uncommon, and may be considered good practice, to establish a floor for technical scoring so that no offer with fewer than x points in the technical evaluation (or fewer than y points in some specific sub-criteria) will qualify. Such an offer will be rejected (and its price or economic offer will also be rejected).

[11] For instance, the requirement to submit a financial offer with the bid under reasonable terms for the commitment and availability of finance.

[12] “Temerity” refers to an offer made on terms that might be considered reckless, in the hope of winning the project and subsequently being able to negotiate a more favourable outcome. In some jurisdictions (for example, in Spain), it is customary to establish a threshold of temerity in relative terms. For example, any offer that is below the average bid by more than 15 percent will be considered too aggressive for the purpose of evaluation. According to Spanish legislation, the authority may give the bidder the opportunity to explain and argue the rationale of that offer, and additional security may be required by the authority to ensure the availability of funds.
